Be sure to check out the videos at the end!

When we build a system to control a robot, we're trying to come up with a mapping from the things that a human knows how to do to the things that the robot knows how to do. Typically, these two sets of things are extremely different (which is often why we built the robot in the first place)! Interfaces bridge the gap by converting things that a human can do into things that a robot can do. Good interfaces make the conversion intuitive for a human. Most interfaces are not good interfaces.

To be fair, sometimes it's really hard to map the disappointingly small number of easily recognizable human actions onto the myriad capabilities of a sophisticated robot. A joystick makes it pretty easy to control a two-degree-of-freedom wheeled robot that can move forward/back and left/right, but what is it like to control a six-degree-of-freedom arm with a joystick?

It's awful. Seriously, this is never going to be a pleasant experience.

As evidence, I offer you the Kinova Jaco robotic arm. The Jaco is an awesome robot. It's fast, quiet, extremely safe, and reasonably easy to program (although its motion planning API needs some improvement). It was originally created as an assistive device to be mounted on a wheelchair, but Kinova also sells it to research labs like mine. We bought a Jaco because it's a reasonable analogue for Robonaut 2 and Valkyrie.

Alright, folks, I'm about to level some harsh criticism at the control system for Jaco, a device of noble purpose that has had a positive impact on many lives. In all fairness to Kinova, they had to build a low-cost, highly durable controller that could be used by a specific population of users with significant disabilities. While I believe (possibly incorrectly) they could have done better, their options were extremely limited. I applaud them for using their talents to improve lives.

Everybody got that?

Ok, this interface is not good.

Jaco comes with a three-degree-of-freedom joystick (forward/back, left/right, and twist left/twist right) with seven additional buttons, only two of which are clearly labeled. Those three axes and seven buttons combinatorially intertwine into a perfect Gordian knot of inoperability. Here's just a taste: the Jaco robotic arm has ELEVEN operating modes, each of which maps motions of the joystick to different motions of the arm. Which mode you're in is communicated via four mysterious, unlabeled blue lights (the astute reader will note that 4 < 11). You can switch between modes with the unlabeled buttons, at least most of the time, and when you can't, it is for inscrutable reasons. The end result is that even after many hours of controlling Jaco with the joystick and studying the rather lengthy user manual, I often have absolutely no idea what is going to happen when I touch the joystick. While rather entertaining, this is, as a rule, not a good place to begin when taking control of an expensive machine.

As a result, I'm reduced to randomly pushing the joystick in a few directions to see which way the arm moves in whatever mode I'm in and then, assuming it's a suitable mode, trying to remember that mapping until I have to switch to another mode (sometimes successfully) to accomplish something else. It's like trying to play a song on a piano that has random notes assigned to the keys and which scrambles the keys around every now and then. A visitor to my lab has no hope of controlling the arm without a great deal of coaching (though it's fun to watch them try). Plus, this whole approach depends on being able to directly observe the robot as you control it, something that's hard to do if it is outside the space station and you aren't!

My team decided to build an interface that would make controlling the robot arm as easy as controlling your own arm. Put simply, we decided to make our arm the joystick.

We've worked with the first-generation Kinect for many years in my lab (we actually started working with it before its release), but this was the first thing we built using the new Kinect. That meant the first day was spent mostly in coordinate-system conversion hell. The creators of Unity3D (our graphics engine of choice) made many amazing choices, but for committing the sin of basing Unity on a left-handed coordinate system I hereby sentence them to 4 hours of swapping and negating axes while trying different Euler-angle rotation orders, times the number of Unity developers in the world: a rather unfortunate penance of 912 years. Garrett has gotten pretty good at this process, which is another way of saying that he's doomed himself to being involved every time we have to do it. That turns out to be surprisingly often, because every single piece of equipment we work with uses a right-handed coordinate system.
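For readers who haven't done this dance, here is a minimal sketch of one common conversion. It assumes the right-handed source frame and Unity's left-handed frame differ only in the direction of the Z axis; real sensors may differ in other axes too, so check your device's documentation. Mirroring a frame across one plane negates that axis for positions, while for rotation quaternions the same mirror negates the *other two* vector components and leaves `w` alone. The `Vec3`/`Quat` types and function names are illustrative, not part of any Unity or Kinect API.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


@dataclass(frozen=True)
class Quat:
    x: float
    y: float
    z: float
    w: float


def rh_to_lh_position(p: Vec3) -> Vec3:
    # Assumption: the frames differ only by the sign of Z, so mirror across the XY plane.
    return Vec3(p.x, p.y, -p.z)


def rh_to_lh_rotation(q: Quat) -> Quat:
    # Mirroring across the XY plane reverses the rotation sense about X and Y
    # but not about Z, so negate the X and Y components; W is unchanged.
    return Quat(-q.x, -q.y, q.z, q.w)


def axis_angle(axis: Vec3, angle: float) -> Quat:
    # Unit quaternion for a rotation of `angle` radians about `axis` (axis need not be unit length).
    n = math.sqrt(axis.x ** 2 + axis.y ** 2 + axis.z ** 2)
    s = math.sin(angle / 2) / n
    return Quat(axis.x * s, axis.y * s, axis.z * s, math.cos(angle / 2))


def cross(a: Vec3, b: Vec3) -> Vec3:
    return Vec3(a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x)


def rotate(q: Quat, v: Vec3) -> Vec3:
    # Rotate v by unit quaternion q: v' = v + w*t + u x t, with u = q.xyz and t = 2(u x v).
    u = Vec3(q.x, q.y, q.z)
    c = cross(u, v)
    t = Vec3(2 * c.x, 2 * c.y, 2 * c.z)
    ut = cross(u, t)
    return Vec3(v.x + q.w * t.x + ut.x, v.y + q.w * t.y + ut.y, v.z + q.w * t.z + ut.z)
```

A useful sanity check is that "rotate, then convert" agrees with "convert, then rotate" for arbitrary inputs, i.e. `rh_to_lh_position(rotate(q, v))` matches `rotate(rh_to_lh_rotation(q), rh_to_lh_position(v))` up to floating-point error. Converting quaternions directly like this also sidesteps the question of which Euler-angle rotation order each system uses.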

We also couldn't interface directly with the Kinect or Jaco APIs, because Unity is based on Mono, which is exactly like .NET except when it would be a huge pain for it not to be. We've learned that it's best not to even try consuming a .NET API in Unity, even if it should work. That way lies hours of rage followed by a dejected retreat to where you should have started: putting everything behind a WebSocket server. If everything is on the same network, the latency usually isn't that bad, and this approach has the added bonus of making it easy to use these devices from any of our lab computers without installing drivers or even rewiring things.
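The nice property of the WebSocket approach is that the Unity client only ever has to parse plain text, so no device SDK assembly has to load under Mono. As a minimal sketch, assuming a hypothetical JSON message schema (a timestamp plus a map of joint names to positions; neither the field names nor the joint names come from the Kinect SDK), the server and its clients only need to agree on something like this:

```python
import json
from typing import Dict, Tuple

Point = Tuple[float, float, float]


def encode_frame(timestamp_ms: int, joints: Dict[str, Point]) -> str:
    # Hypothetical schema: {"t": <ms>, "joints": {"<name>": [x, y, z], ...}}
    return json.dumps({"t": timestamp_ms, "joints": {name: list(p) for name, p in joints.items()}})


def decode_frame(text: str) -> Tuple[int, Dict[str, Point]]:
    # Inverse of encode_frame; raises KeyError if a required field is missing.
    msg = json.loads(text)
    joints = {name: (float(p[0]), float(p[1]), float(p[2])) for name, p in msg["joints"].items()}
    return int(msg["t"]), joints
```

In this sketch the server would send one such text message per tracking frame over the socket. Any language with a JSON parser can consume it, which is what makes the devices usable from any machine on the network.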

Kinect gives us the 3D location of the joints in your arm, so in theory we could use those to directly position the joints of Jaco. It turns out that's not a good idea: Jaco is less capable than a human arm, and its joints aren't even in the same spots. Instead, we just tell Jaco to position its hand at the same position and orientation as the human's hand (in its own frame of reference) and ignore what the rest of the arm does to get it there. This means that the only joint positions we really needed from Kinect to control Jaco were the hand and fingertip.
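Here is a minimal sketch of that idea. It assumes both points have already been converted into the robot's frame and that a hypothetical calibration point `origin` marks where the robot's base sits relative to the user. The hand position gives the end-effector target, and the hand-to-fingertip direction gives a pointing direction. A single direction only pins down two of the three rotational degrees of freedom, so roll about that axis is left unconstrained here. The function names are illustrative, not from the Kinect or Jaco APIs.

```python
import math
from typing import Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def normalize(v: Vec) -> Vec:
    n = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if n < 1e-9:
        # Hand and fingertip coincide (e.g. a tracking glitch): no usable direction.
        raise ValueError("hand and fingertip are too close to define a direction")
    return (v[0] / n, v[1] / n, v[2] / n)


def hand_target(hand: Vec, fingertip: Vec, origin: Vec) -> Tuple[Vec, Vec]:
    """Return (position, direction) for the robot's end effector.

    position:  the human hand, expressed relative to the hypothetical `origin`.
    direction: unit vector from hand to fingertip; roll about it is unconstrained.
    """
    return sub(hand, origin), normalize(sub(fingertip, hand))
```

The resulting target would then be handed to the arm's own inverse kinematics, which decides what the rest of the joints do; the sketch deliberately says nothing about them.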

We didn't want to stop there, though, because waving your arm around while staring at a rendered arm on a computer screen isn't what you'd call natural (though again, it's entertaining for others to watch). Like with the joystick, it can be a bit hard to know which way you need to move your arm to trigger the desired motion of the robot arm. It certainly doesn't feel like the arm on the screen is your arm.

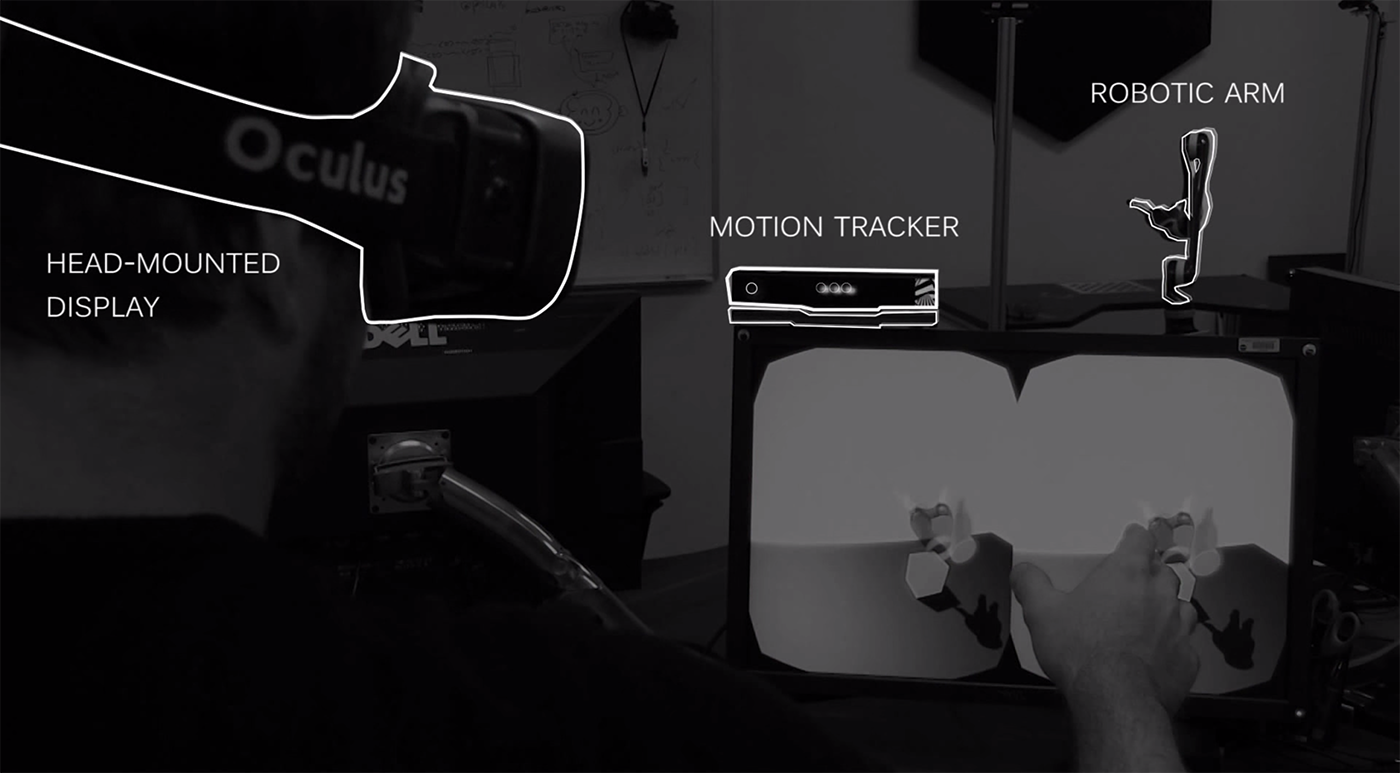

This is really just a problem of perspective, and we solved it with the Oculus Rift head-mounted display. We grabbed one more bit of information from the Kinect, the user's head position, and used it to control the Rift's point of view in the Unity3D scene. Later, we also filled the scene with sensor data acquired from a PrimeSense 3D sensor located near the robot, so we could see a live 3D model of the robot's surroundings.

You're probably wondering whether we could possibly add any more gadgets to this system (there might be room for a Clapper in here somewhere), but we didn't need to. We had crossed a threshold into something remarkable. I have built numerous systems to control different types of robots, and there's a delightful and highly addictive *click* when we finally arrive at something that feels right. With the Rift on, we felt a sense of presence in the robot's environment. On top of that, in Alex's words, "the robot arm started feeling like an extension of my own arm". I won't claim that it actually felt like my own arm, because there was no haptic feedback. Additionally, Jaco can't move as quickly as my arm, so it was often playing catch-up. Even with these limitations, a visitor to my lab can immediately take control of the arm with zero training and usually pick a block up off a table within a minute or two. Furthermore, you don't even need to be in the same ZIP code as the robot. Have a look at the video below to see the system in action!

I've mentioned latency a few times without mentioning the pesky speed-of-light problem we have to deal with when controlling space robots from Earth. It's not that I forgot about it (believe me, this isn't something people in my line of work forget about). It's just that we are targeting this work at low-latency operational scenarios, like an astronaut inside the space station controlling a robot outside the space station.

Controlling a humanoid robot arm with a human arm isn't too big a stretch, but how far can we take this approach? Around the same time as our work with the Oculus Rift and Kinect, we performed a series of experiments with the Leap Motion hand tracker. One of the craziest (and coolest) things we did with it was to use it to control a giant six-legged robot called ATHLETE. You can see what that looks like (and a few more things we did with the Leap Motion at JPL) in the video below.

We even drove the real robot this way, live from a stage at the Game Developers Conference.